A quick overview

- Canada has played a key role in shaping modern AI, particularly in deep learning, machine learning, and ethical AI research.

- Geoffrey Hinton co-created AlexNet and helped launch Canada’s deep learning ecosystem, later joining Google and advocating for ethical AI.

- Yoshua Bengio founded MILA and advanced NLP techniques that underpin models like GPT and BERT, cementing Canada’s global AI reputation.

- Richard Sutton transformed reinforcement learning, enabling AI to learn through trial and error—impacting robotics, gaming, and automation.

- Institutions like MILA, Amii, and the Vector Institute have made Canada a global hub for AI talent, research, and innovation.

Introduction

Over the years, Canada has played a pivotal role in shaping artificial intelligence, laying the groundwork for groundbreaking research and establishing world-class AI institutions. With government-backed investments, a thriving tech ecosystem, and a deep talent pool, Canada’s influence on AI continues to grow, a legacy set in motion by the country’s own AI founding fathers.

Canada’s AI contributions have been most notable in deep learning, machine learning, and ethical AI research, fields that have redefined how machines interact with the world. These advancements wouldn’t have been possible without the pioneering work of Canadian researchers whose discoveries transformed AI from theory into reality.

The Pillars of AI: The Canadian Minds That Made It Happen

These researchers challenged conventional thinking and revolutionized artificial intelligence with their insights into deep learning, neural networks, and reinforcement learning. Their breakthroughs led to the AI-powered tools and technologies we use every day, from chatbots and recommendation algorithms to autonomous systems and voice assistants.

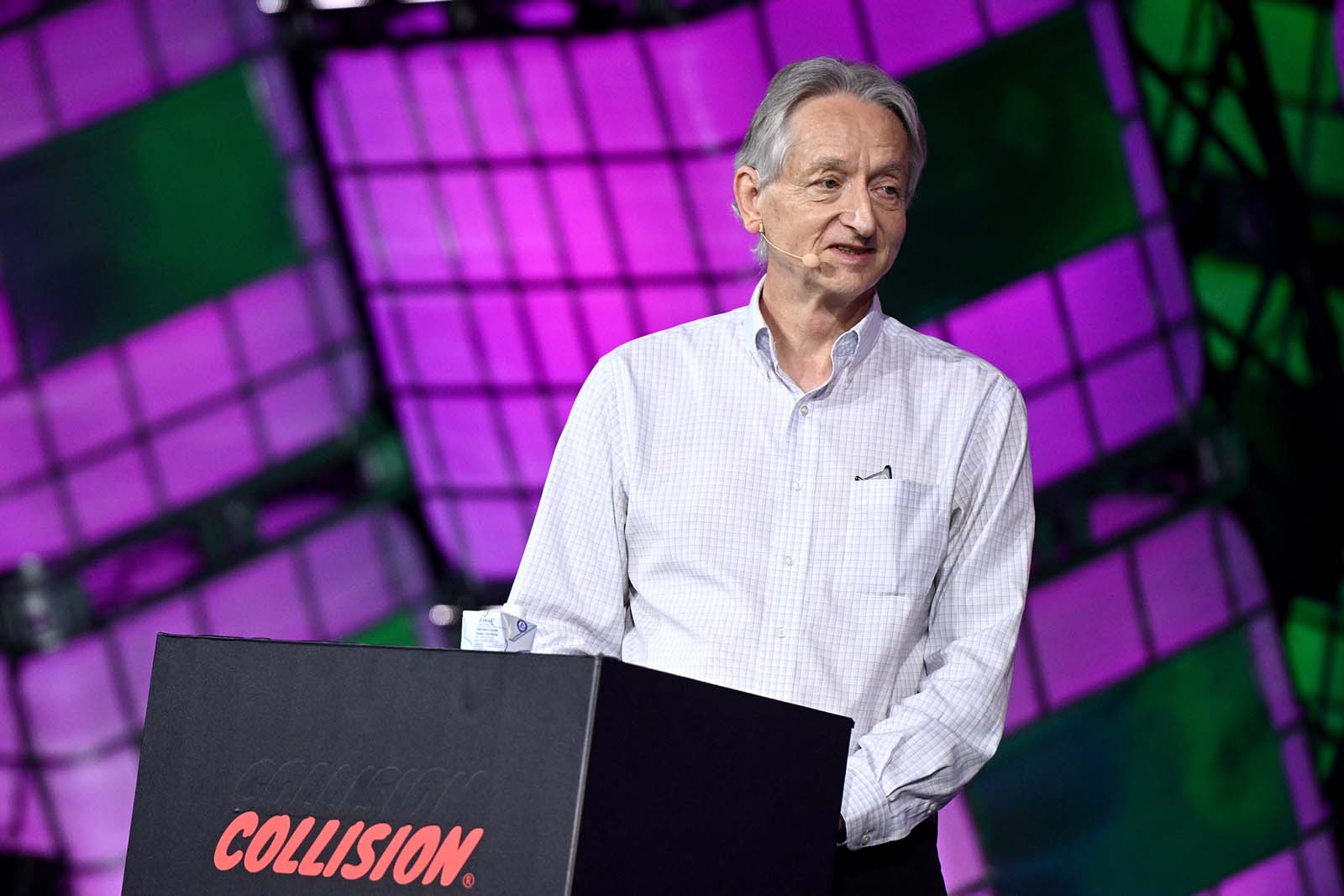

Geoffrey Hinton

Photo by Collision Conf © Collision Conf, used under CC BY 2.0. No changes made.

Early Life & the Birth of Deep Learning

Born to Howard Everest Hinton, an accomplished entomologist, Geoffrey Hinton grew up in a family of scholars. His family tree includes several intellectual greats: Charles Howard Hinton, a mathematician famous for visualizing higher dimensions; George Everest, the surveyor after whom Mount Everest is named; and George Boole, the originator of Boolean logic, which serves as the basis of modern computing.

Geoffrey Hinton started with a degree in experimental psychology from Cambridge, then took a deep dive into AI at Edinburgh, where he explored neural networks that mimic human brain activity. His postdoctoral work at UC San Diego pushed him further into this field. Later, while at Carnegie Mellon, he worked with David Rumelhart and Ronald J. Williams on backpropagation, co-authoring the 1986 paper that popularized the algorithm behind modern deep learning. Backpropagation lets a neural network work out how much each of its weights contributed to an error and then adjust those weights through gradient descent, and it became the foundation of today’s AI revolution.
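To make the idea concrete, here is a minimal sketch of the chain-rule gradient step that backpropagation applies layer by layer, shown on a single sigmoid neuron. The data, learning rate, and function names are illustrative assumptions, not taken from the 1986 paper.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, lr=0.5, epochs=2000):
    """Fit one sigmoid neuron to (x, target) pairs with gradient descent."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, target in samples:
            # Forward pass: compute the neuron's output.
            y = sigmoid(w * x + b)
            # Backward pass: chain rule on the loss 0.5 * (y - target) ** 2.
            dloss_dy = y - target
            dy_dz = y * (1.0 - y)
            grad_z = dloss_dy * dy_dz
            # Gradient descent: move each parameter against its gradient.
            w -= lr * grad_z * x
            b -= lr * grad_z
    return w, b


# Toy data (hypothetical): negative inputs map to 0, positive inputs to 1.
samples = [(-2.0, 0.0), (-1.0, 0.0), (1.0, 1.0), (2.0, 1.0)]
w, b = train_neuron(samples)
predictions = [sigmoid(w * x + b) for x, _ in samples]
```

In a multi-layer network the same backward pass is repeated for every layer, passing the error signal from the output back toward the input.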

Building Canada’s Deep Learning Hub

In 1987, driven by his opposition to U.S. military funding and the Reagan administration, Hinton left the United States for Canada. As a professor at the University of Toronto (U of T), he continued his research for 11 years before taking a leadership position at the Gatsby Computational Neuroscience Unit at University College London in 1998. Returning to U of T in 2001, he further advanced neural network models and began exploring their practical applications, leading directly to the rise of deep learning technology.

From AlexNet to AI Ethics: Hinton’s Lasting Impact

In 2012, alongside his graduate students Alex Krizhevsky and Ilya Sutskever, Hinton created AlexNet, an eight-layer neural network designed to classify images from ImageNet, a massive online image database. AlexNet was a turning point for AI research, leading Hinton and his team to establish DNNresearch, which Google acquired in 2013 for $44 million. Hinton subsequently joined Google Brain, serving as a Vice President and engineering fellow.

In May 2023, Hinton stepped down from Google to speak openly about the potential risks of AI, citing concerns about misinformation and the impact of automation on the job market.

Throughout his career, Hinton has received multiple prestigious awards, including the David E. Rumelhart Prize (2001) and Canada’s highest honor for science and engineering, the Gerhard Herzberg Canada Gold Medal (2010). In 2018, he was awarded the Turing Award, often referred to as the “Nobel Prize of Computing,” for his revolutionary work on neural networks. His impact on deep learning was further recognized in 2022 when he received the Royal Society’s Royal Medal for his pioneering advancements in AI.

Yoshua Bengio

Photo of Yoshua Bengio by the International Telecommunication Union, licensed under CC BY 2.0. Source. No changes made.

Early Life & the Foundation of a Vision

Born in Paris to a Moroccan-Jewish family, Yoshua Bengio moved to Canada when he was young. He pursued a career in computer science, completing his bachelor’s, master’s, and Ph.D. at McGill University.

Bengio’s research into artificial neural networks began during his graduate studies in the late 1980s, a time when the idea was widely dismissed. However, he remained convinced that machines could learn in ways that mimicked human cognition. After completing his Ph.D. in 1991, Bengio worked as a postdoctoral researcher at MIT before returning to Canada in 1993 to join the Université de Montréal.

During this period, Bengio explored representation learning, a concept that helped AI models develop internal representations of language, images, and data. His research into word embeddings became a cornerstone of natural language processing, enabling AI to understand relationships between words in context. His work also contributed to sequence-to-sequence learning, laying the foundation for transformer-based models, the architecture behind OpenAI’s GPT models and Google’s BERT.
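As a rough illustration of how word embeddings capture relationships between words, the sketch below compares hand-picked toy vectors with cosine similarity. The three-dimensional vectors are hypothetical values chosen for this example; real embeddings are learned from text and have hundreds of dimensions.

```python
import math

# Hypothetical embeddings, invented for illustration only.
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Related words point in similar directions, unrelated words do not.
royal = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["queen"])
fruit = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["apple"])
```

With these toy values, `royal` comes out far higher than `fruit`, which is the kind of signal a language model uses to treat "king" and "queen" as related.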

MILA, Deep Learning, and Global Impact

In 1993, Bengio founded MILA (Montreal Institute for Learning Algorithms), which has since evolved into one of the world’s leading AI research institutes. Under his leadership, MILA became a global AI powerhouse, driving advancements in deep learning, NLP, and ethical AI. Together with the Vector Institute in Toronto and the Alberta Machine Intelligence Institute (Amii) in Edmonton, MILA has helped place Canada at the forefront of AI research.

In 2018, Bengio, alongside Hinton and Yann LeCun, received the Turing Award for their groundbreaking work in deep learning. Today, he remains one of AI’s leading voices, advocating for responsible AI development and regulation.

Richard Sutton

Photo by Steve Jurvetson, licensed under CC BY 2.0. Source. No changes made.

Richard Sutton is a recognized authority on reinforcement learning. His temporal difference (TD) learning algorithm transformed how machines refine predictions, influencing robotics, automation, and game AI. According to Lark’s AI glossary, temporal difference learning updates predictions based on the current and future values of rewards. By focusing on prediction errors, the algorithm adjusts its predictions as new information becomes available, which improves decision-making in AI systems.
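The prediction-error update can be sketched in a few lines. This is a minimal TD(0) example on a hypothetical five-state corridor where the agent always steps right and earns a reward of 1 on reaching the end; the environment, step size `alpha`, and discount `gamma` are assumptions made for illustration.

```python
def td0_chain(num_states=5, episodes=500, alpha=0.1, gamma=0.9):
    """Estimate state values on a one-way corridor with TD(0)."""
    values = [0.0] * num_states  # the terminal state's value stays 0
    terminal = num_states - 1
    for _ in range(episodes):
        state = 0
        while state != terminal:
            next_state = state + 1
            reward = 1.0 if next_state == terminal else 0.0
            # Prediction error: what we just saw versus what we expected.
            td_error = reward + gamma * values[next_state] - values[state]
            # Nudge the old prediction toward the new evidence.
            values[state] += alpha * td_error
            state = next_state
    return values


values = td0_chain()
```

Each estimate settles near the discounted reward still ahead of that state, so states closer to the goal end up with higher values than states farther away.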

After completing his Ph.D., Sutton worked at GTE Laboratories and AT&T Bell Labs before moving to Canada in 2003. There, he helped found Amii (Alberta Machine Intelligence Institute), turning Edmonton into a global hub for reinforcement learning research. His book, Reinforcement Learning: An Introduction, remains the definitive text on the subject.

Sutton’s work has shaped AI-driven decision-making in industries ranging from autonomous vehicles to predictive analytics. His essay, “The Bitter Lesson,” reinforced his belief that AI advances best through scale and computational power rather than handcrafted human rules. Sutton received the 2024 Turing Award alongside Andrew Barto for their contributions to reinforcement learning.

Conclusion

Canada’s influence on AI is undeniable. With a strong foundation in research, world-class institutions, and pioneering minds, the country continues to shape the future of artificial intelligence. As AI evolves, so does the need for ethical considerations and responsible development. Where does Canada’s AI leadership go next? The conversation is just getting started.

Sources

Geoffrey Hinton

- Schmidhuber, J. (2015). Deep Learning in Neural Networks: An Overview. Neural Networks, 61, 85–117.

- University of Toronto News. (2023). Geoffrey Hinton Resigns from Google to Speak on AI Risks

- Wired. (2018). The Three Godfathers of AI and Their Path to the Turing Award

- The Royal Society. (2022). Royal Medal: Geoffrey Hinton

Yoshua Bengio

- MILA (Montreal Institute for Learning Algorithms)

- Bengio, Y. (2009). Learning Deep Architectures for AI. Foundations and Trends in Machine Learning

- Turing Award Announcement. (2018). For Deep Learning Contributions. ACM

- McGill University. (n.d.). Alumni Profiles: Yoshua Bengio

Richard Sutton

- Sutton, R.S., & Barto, A.G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.

- Amii (Alberta Machine Intelligence Institute)

- Sutton, R. (2019). The Bitter Lesson

- Turing Award Press Release (2024). Recognition for Sutton and Barto’s Reinforcement Learning Research

#ArtificialIntelligence #MachineLearning #DeepLearning #InnovationInCanada #AIResearch #CanadianTech #AILeadership #TechStrategy #RAndD #ResponsibleAI #YoshuaBengio #GeoffreyHinton #RichardSutton #InnovationFunding

If you're building AI-driven technology, exploring innovation incentives, or looking to align your R&D with Canada's emerging strengths, we're here to support your strategy.

Let’s talk about how your innovation can be fuelled by expert guidance and smart funding.

Contact Checkpoint Research today to explore how Canada’s AI legacy can power your next breakthrough.

8,500

Number of Projects

500M

Total Claim Expenditures

96.5%

Successful Claims

A quick overview

- Digital twins are revolutionizing industries by creating real-time virtual replicas of physical systems, enhancing decision-making and efficiency.

- Unlike traditional simulations, digital twins interact with their real-world counterparts, continuously updating and improving through data integration and AI.

- Digital twins are already transforming healthcare, infrastructure, manufacturing, and more, enabling predictive maintenance, optimized operations, and personalized medical treatments.

- The biggest challenge isn’t collecting data—it’s connecting and integrating vast amounts of data from different sources into a cohesive model.

- Businesses investing in digital twins gain a competitive edge, reducing costs, improving efficiency, and leveraging advanced analytics for smarter decision-making.

- Adopting digital twin technology requires strategic planning, investment, and expertise, but the long-term benefits far outweigh the initial challenges.

Introduction

Businesses and researchers are increasingly turning to digital twins—virtual replicas of physical systems—to optimize performance, predict failures, and drive smarter decision-making. Unlike traditional models or simulations, digital twins continuously update with real-time data, enabling dynamic interaction between the physical and digital worlds.

From healthcare and infrastructure to manufacturing and energy, digital twins are revolutionizing industries by enhancing efficiency, reducing costs, and improving outcomes. However, implementing this technology at scale comes with challenges—particularly in integrating vast amounts of data from diverse sources.

This article explores what digital twins are, how they work, and why they’re becoming essential across industries. We’ll also highlight real-world applications, emerging trends, and how businesses can leverage digital twin technology for long-term success.

What is a digital twin?

A digital twin is a real-time virtual replica of a physical system, product, or process that continuously updates and interacts with its real-world counterpart. By leveraging data, AI, and IoT, digital twins provide insights, predictions, and optimizations that drive better decision-making across industries.

Digital twins differ from simulations. The most important difference is that a digital twin interacts back and forth with its physical counterpart, whereas a simulation only receives data and cannot act on the system it models.

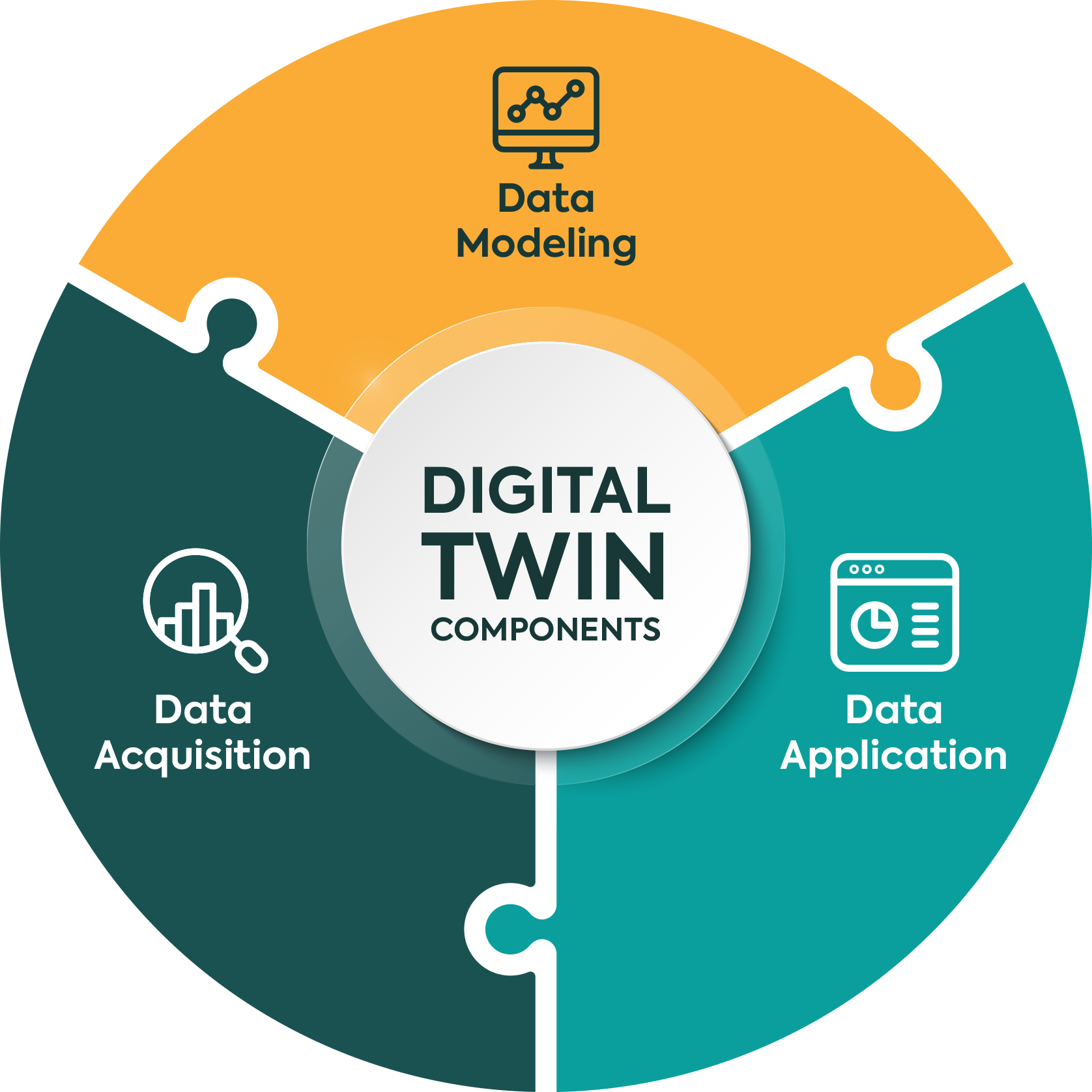

A fully functional digital twin relies on three core elements:

- Data Acquisition – Collecting real-world data through sensors, IoT devices, and other sources to provide accurate, up-to-date inputs.

- Data Modeling – Structuring and analyzing collected data to create a digital representation that mirrors the behavior, interactions, and conditions of the physical system.

- Data Application – Using the digital twin for simulations, predictions, real-time monitoring, and decision-making to improve performance, reduce risks, and optimize efficiency.
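The three elements above can be sketched as a small class. This is a simplified illustration under stated assumptions: `PumpTwin`, its vibration limit, and the moving-average model are hypothetical, and a production twin would stream sensor data through an IoT platform instead of taking readings by hand.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class PumpTwin:
    """Hypothetical digital twin of a water pump (illustrative only)."""

    vibration_limit: float = 5.0  # assumed alarm threshold in mm/s
    window: int = 3               # number of recent readings to average
    readings: list = field(default_factory=list)

    def acquire(self, vibration_mm_s: float) -> None:
        """Data acquisition: store a new sensor reading."""
        self.readings.append(vibration_mm_s)

    def model_state(self) -> float:
        """Data modeling: estimate condition as a moving average."""
        recent = self.readings[-self.window:]
        return mean(recent) if recent else 0.0

    def recommend(self) -> str:
        """Data application: turn the modeled state into an action."""
        if self.model_state() > self.vibration_limit:
            return "schedule_maintenance"
        return "continue"


twin = PumpTwin()
for reading in [2.0, 2.5, 3.0]:
    twin.acquire(reading)
early = twin.recommend()

for reading in [6.0, 7.0, 8.0]:
    twin.acquire(reading)
late = twin.recommend()
```

The recommendation flowing back to the physical pump is what separates a twin from a one-way simulation: here `early` stays at "continue" while `late` switches to "schedule_maintenance" once the recent readings pass the limit.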

How digital twins help a wide range of industries

- Automotive & Transportation

- Agriculture

- Manufacturing

Amazon has created a platform, AWS IoT TwinMaker, to make building digital twins more accessible to smaller operations. With digital twins, manufacturing companies can move from reactive to predictive operations.

- Healthcare

- Aerospace

- Oil & Gas

- Energy & Utilities

By way of example, the City of Toronto and York Region have collaborated on a digital twin of their water systems. It enables real-time monitoring and simulation of wear and tear on water infrastructure, improving decisions about planning, maintenance, and the allocation of public resources. The technology is designed to make water networks more efficient to operate and to improve service delivery to residents.

- Telecommunication

- Retail

- Infrastructure

In June 2024, Ontario’s government announced it is investing $5 million in digital twin technology to help build critical infrastructure faster and within budget. Testing will start with three major projects: the new Peter Gilgan Mississauga Hospital, the redeveloped Ontario Place, and the western extension of the Eglinton Crosstown light-rail transit (LRT) line.

In construction, digital twins create virtual models of physical assets to help identify and mitigate problems early on. By testing the applications and benefits of this digital modeling technology, the government says it will not only save money and time, but also improve worker safety.

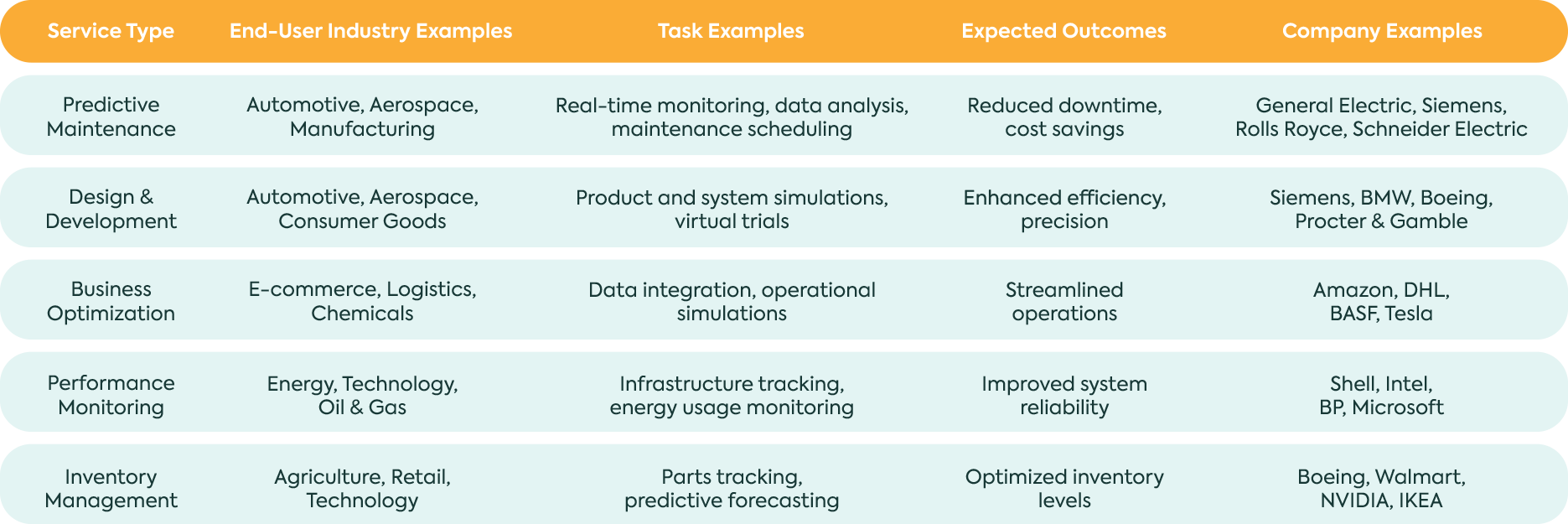

The Major Service Applications of Digital Twin Technology in 2023 with Industry and Task Examples

Why integration has been slow, and why it’s growing now

- The digital twin concept has existed for a long time, but only recently have the data storage, analytics, and ML systems it depends on matured enough to simulate the real world accurately.

- Integrating digital twins requires significant investment and planning due to the technology’s complexity and the major shifts it demands in business operations. Many companies are still evaluating the costs and potential savings of digital twins, which require a mix of advanced technologies and skilled personnel. This transition creates a skills gap and challenges industries to both adopt new technologies and find qualified workers to operate them efficiently.

- The current acceleration is driven mainly by the falling cost of the technologies behind IoT and digital twins, and by growing industry interest in cutting costs and improving supply chain operations. As a result, the market for digital twin technology, valued at $6.9 billion in 2022, is expected to reach $73.5 billion by 2027, a CAGR of more than 60 percent.
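The growth figure in the last point can be checked with the standard compound annual growth rate formula. The short sketch below uses only the two market values and years quoted above; the function name `cagr` is our own.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10 percent)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market size in billions of dollars: 6.9 in 2022, 73.5 projected for 2027.
growth = cagr(6.9, 73.5, 2027 - 2022)
print(f"{growth:.1%}")
```

The result lands just above 60 percent, consistent with the quoted figure.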

Conclusion

Digital twins are rapidly reshaping how industries operate, offering real-time insights, predictive capabilities, and enhanced efficiency. From healthcare and infrastructure to manufacturing and energy, this technology is unlocking new possibilities by bridging the gap between the physical and digital worlds.

While challenges like data integration and implementation complexity remain, the momentum behind digital twins is undeniable. As businesses and researchers continue to refine and expand their use, digital twins will play an increasingly critical role in innovation, problem-solving, and strategic decision-making—transforming the way we design, monitor, and optimize the world around us.

#DigitalTwins #Innovation #AIandData #SmartTechnology #FutureOfBusiness

Embracing digital twin technology can revolutionize your business operations by creating dynamic digital replicas of physical assets, enhancing efficiency and innovation. If you're interested in leveraging this cutting-edge approach, our team of experts is ready to guide you through the implementation process. Contact us today to explore how digital twins can transform your business.

A quick overview

- AI is a broad concept encompassing tasks that require human intelligence, while ML is a subset of AI focused on developing algorithms for learning from data.

- AI seeks to create autonomous machine intelligence, while ML is the method used to achieve that goal.

- Limitations of AI and ML include struggles with common sense, creativity, emotional intelligence, and ethical decision-making.

- Common misconceptions about AI include complete autonomy, being a cure-all solution, and replacing human jobs.

- The future involves collaboration between humans and machines to leverage their respective strengths for innovation and progress.

Introduction

In the world of technological advancements, two acronyms have made waves: AI and ML. As these terms become a constant in our daily lives, it is important to understand the nuances and to demystify the potential and limitations of Artificial Intelligence (AI) and Machine Learning (ML).

Breaking it Down: What's AI and ML?

Artificial Intelligence, often referred to as AI, is a broad concept encompassing the development of machines capable of performing tasks that typically require human intelligence. This includes learning, reasoning, problem-solving, perception, language understanding, and even speech recognition. To put it simply, AI is the robot.

Machine Learning, a subset of AI, focuses on the development of algorithms that enable machines to learn from data and improve their performance over time without explicit programming. It’s the driving force behind the evolution of AI, allowing systems to adapt and enhance their capabilities. In other words, ML is the brain.

Decoding the Mystery: Understanding the Subtle Distinction between AI and ML

While AI is the overarching concept, ML serves as its dynamic engine. Think of AI as the broader goal of autonomous machine intelligence, and ML as the specific method used to achieve that goal. AI seeks to create machines capable of mimicking human intelligence, whereas ML provides the means for machines to learn and evolve through experience.

In essence, all machine learning is AI, but not all AI is machine learning.
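A small sketch can make the distinction tangible. Both functions below answer the same question, but only the second derives its rule from data. The temperature examples and the midpoint rule are simplified assumptions chosen for illustration, not a real ML algorithm from any library.

```python
def rule_based_is_hot(temp_c: float) -> bool:
    """AI without ML: a human hard-codes the decision rule."""
    return temp_c > 25.0


def learn_threshold(examples):
    """ML in miniature: derive the rule from labeled examples.

    Picks the midpoint between the warmest 'not hot' example and the
    coolest 'hot' example.
    """
    warmest_not_hot = max(t for t, hot in examples if not hot)
    coolest_hot = min(t for t, hot in examples if hot)
    return (warmest_not_hot + coolest_hot) / 2.0


# Hypothetical labeled data: (temperature in Celsius, was it "hot"?)
examples = [(10.0, False), (18.0, False), (22.0, False), (28.0, True), (33.0, True)]
threshold = learn_threshold(examples)


def learned_is_hot(temp_c: float) -> bool:
    """Apply the threshold that was learned from the examples."""
    return temp_c > threshold
```

If the labeled examples change, `learn_threshold` produces a new rule without anyone rewriting the code, whereas `rule_based_is_hot` stays fixed until a person edits it.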

The Limitations in Action: What AI and ML Still Can't Achieve

AI and ML are impressive, but they’re not superheroes. There are still aspects they struggle to fully comprehend.

1. Common Sense and Creativity

Even the most advanced AI and ML systems face challenges in understanding common sense and showcasing true creativity. While they excel at analyzing patterns and producing outcomes from available data, the innate spark of intuition and the capacity to navigate intricate, unexplored territories remain distinctly human attributes.

2. Emotional Intelligence

Understanding and responding to human emotions is a skill deeply embedded in our biological fabric. AI and ML models may recognize emotions to some extent, but the profound nuances of empathy, sarcasm, or subtle emotional cues often elude their understanding.

3. Ethical Decision-Making

AI systems lack inherent ethical reasoning. They operate based on algorithms and data, without an intrinsic moral compass. The responsibility for ethical decision-making still rests on human shoulders, raising concerns about biases embedded in training data and the potential for unintended consequences.

Beyond the Hype: What AI Isn't (Yet)

As society navigates the world of AI and ML integration, it’s crucial to dispel some common misconceptions and clarify what these technologies are not currently poised to achieve.

1. Complete Autonomy

Despite rapid advancements, AI systems are far from achieving full autonomy. They require human oversight and intervention, particularly in critical decision-making scenarios. The notion of machines operating independently without human involvement remains an aspiration.

2. Cure-All Solution

AI is a powerful tool, but it is not a solution for all problems. While it excels in data analysis and pattern recognition, it cannot replace the nuanced understanding, adaptability, and holistic thinking inherent in human problem-solving. Human collaboration remains integral for addressing complex challenges.

3. Replacement of Human Jobs

The fear of widespread job displacement due to AI and ML is a common misconception. While automation may streamline certain tasks, it also opens up new avenues for employment and creativity. The symbiotic relationship between humans and AI promises a future where these technologies augment our capabilities rather than replace them.

Conclusion

In a nutshell, AI and ML are like our sidekicks in this tech adventure. As we continue this technological odyssey, it is essential to approach these advancements with a nuanced understanding, appreciating what AI and ML can achieve while acknowledging their current boundaries.

The future lies in a collaborative environment where humans and machines complement each other’s strengths, paving the way for innovation that aligns with our values, ethics, and aspirations. The journey ahead holds the promise of unprecedented possibilities, and as we navigate this evolving landscape, the synergy between human intelligence and artificial ingenuity will shape the destiny of our technological future.

#ai #ml #machinelearning #automation #innovation

If you’re looking for an SR&ED consultant for AI development or other computer science research endeavours, please feel free to reach out to us for a complimentary initial consultation to determine how SR&ED tax credits could help your business.
